This Week In SEO 32

Delayed Penguin, Quality Algorithm Update, AdWords Structured Data, & More

Penguin Update Not Happening This Year

http://searchengineland.com/google-new-penguin-algorithm-update-not-happening-until-next-year-237540

You’ve waited this long, might as well wait a little bit longer!

Google recently announced that they won’t be updating the Penguin algorithm before the start of next year (despite earlier reports that they would be).

Search Engine Land reports:

A Google spokesperson told us today, “With the holidays upon us, it looks like the penguins won’t march until next year.”

The next Penguin update is expected to be real-time, meaning as soon as Google discovers the links to your site, be they bad or good links, the Penguin algorithm will analyze those links in real time, and ranking changes should happen in almost real time.

…probably easier to avoid getting penalized altogether.

Using PageRank for Internal Link Optimization

http://www.stateofdigital.com/using-pagerank-internal-link-optimisation/

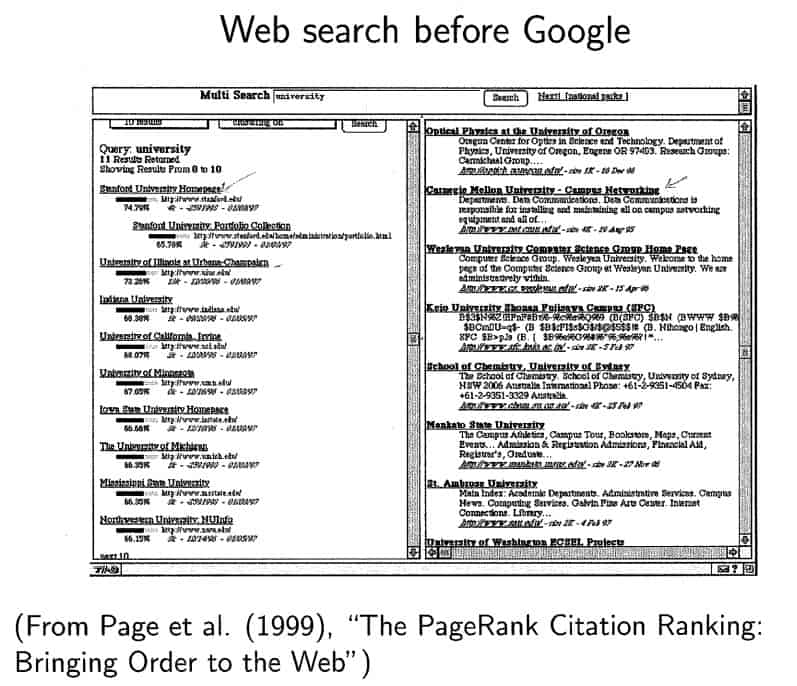

Heads up, this one contains some serious mathematics. State of Digital goes over a brief history of PageRank and shows you how to use some great tools (like Screaming Frog) to crawl a site and calculate the PageRank distribution for internal links. If you’re into this kind of thing, definitely check out this post.

A few other tools that calculate an internal PageRank-style metric for you:

1. DeepCrawl: this UK-based startup is using DeepRank: “DR is a measurement of internal link weight calculated in a similar way to Google’s basic PageRank algorithm. Each internal link is stored and, initially, given the same value. The links are then iterated a number of times, and the sum of all link values pointing to the page is used to calculate their respective DeepRank. This process produces a numerical value between 1 and 10, letting you identify the pages and problems that indicate the greatest impact on your website.”

2. Botify: this French tool actually calculates three metrics per URL for you: Internal Pagerank, Internal Pagerank Position and Raw Internal Pagerank.

3. Onpage.org: this German tool, now finally available in English, uses the traditional concept of PageRank.
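If you want a feel for what these tools are doing under the hood, here is a minimal Python sketch of the basic PageRank iteration applied to an internal link graph. It is not how DeepCrawl, Botify, or Onpage.org actually compute their metrics. The function name `internal_pagerank`, the 0.85 damping factor, the iteration count, and the four-page `site` are all assumptions made up for illustration, and the scores are left as raw fractions rather than scaled to a 1 to 10 range.

```python
def internal_pagerank(links, damping=0.85, iterations=50):
    """Basic PageRank over an internal link graph (illustrative sketch).

    links maps each URL to the list of internal URLs it links to.
    """
    # Collect every page that appears as a link source or a link target.
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    n = len(pages)

    # Every page starts with the same score.
    rank = {page: 1.0 / n for page in pages}

    for _ in range(iterations):
        # Base share each page receives regardless of who links to it.
        new_rank = {page: (1.0 - damping) / n for page in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's score evenly across its outgoing links.
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # A page with no outgoing links spreads its score to all pages.
                share = damping * rank[page] / n
                for other in pages:
                    new_rank[other] += share
        rank = new_rank
    return rank


# Hypothetical four-page site, e.g. built from a crawler's link export.
site = {
    "/": ["/blog", "/products", "/about"],
    "/blog": ["/", "/products"],
    "/products": ["/"],
    "/about": ["/"],
}

scores = internal_pagerank(site)
for url, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{url:10s} {score:.3f}")
```

In this toy site the home page collects the most internal link weight because every other page links back to it, and `/products` outranks `/about` because it gets a second link from `/blog`. Swapping in your own crawl data shows which pages your internal linking is actually favoring.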

Does CTR Determine SERP Rank?

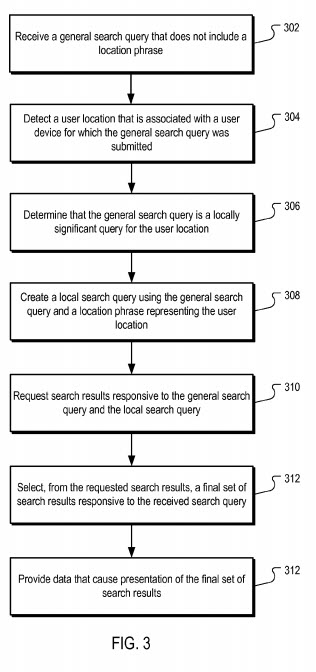

Another hard-core technical post. Bill Slawski dives into whether or not Google uses click-through rate to determine which sites appear in the SERPs. This is a common SEO debate, but this post uses Google patents to back up the following (admittedly underwhelming) conclusion:

Google does have some patents that illustrate how they might use clicks upon specific searches to determine what results they might display in search results, and where those might be ranked.

Whether or not Google uses information about click throughs seems to be something that they have been denying, and we can’t be certain about. Google has told us that just because they have a patent on something doesn’t necessarily mean they are using what is described in that patent.

Adwords Doubles the Amount of Structured Data Displayed In Ads

This equation rings truer with every piece of ad-related news that comes out:

Google <3 AdWords > Organic Search.

Which, duh. One makes them a ton more money than the other.

The latest news to back this up is about how much more data an ad can now display:

Google has now doubled the amount of structured information that can be shown with text ads, giving advertisers an additional line of structured information to work with.

This can be accomplished through selecting two predefined “Headers” — which serve as your structured snippets — and then customizing those headers with two sets of values. The two sets of information now have the possibility of being displayed at the same time.

Phantom III (a.k.a. Quality Update)

http://blog.searchmetrics.com/us/2015/12/02/quality-update-phantom-3/

All SEOs have crystal balls and name generators when it comes to algorithm updates…

The “Phantom” algorithm update actually turned out to be basically the same thing that Google called the “Quality” update. Sticking with the “Phantom” name, there’s been some activity popping up recently that could be a new iteration of that quality update.

Searchmetrics.com has an interesting post on this new update and some of its possible implications. It’s not all doom and gloom. This update seems to be really rewarding some sites that get the quality content thing right, and sorting out some awkward situations, such as:

While the Phantom Update II punished pages that exhibited duplicate or very similar content, the new guidelines say that for specific topics duplicate content is no longer a problem. Google offers the example of song lyrics: the text always remains the same, but this content is not duplicated. Users can compare the accuracy and quality of presentation of the results they find.

The same logic seems to apply for dictionaries. After the Phantom Update Merriam Webster witnessed a 13% loss in visibility, among the biggest losers. Following this update, however, Merriam Webster is the single biggest winner in Google US search results, gaining 398,016 points in SEO Visibility. This increase almost recovers the loss in visibility due to the Phantom Update.

And here is your weekly kitty: